What Is a Semaphore?

If I had truly understood and used it well, I wouldn't be living like this

The origin of the complications, which lead to such intricate solutions as the one described in section 2.2 is the fact that the indivisible accesses to common variables are always "one-way information traffic": an individual process can either assign a new value or inspect a current value. Such an inspection itself, however, leaves no trace for the other processes and the consequence is that, when a process want to react to the current value of a common variable, its value may be changed by the other processes between the moment of its inspection and the following effectuation of the reaction to it. In other words: the previous set of communication facilities must be regarded as inadequate for the problem at hand and we should look for better adapted alternatives.

Such an alternative is given by introducing a) among the common variables special purpose integers, which we shall call "semaphores". b) among the repertoire of actions, from which the individual processes have to be constructed, two new primitives, which we call the "P-operation" and the "V-operation" respectively. The latter operations always operate upon a semaphore and represent the only way in which the concurrent processes may access the semaphores.

The semaphores are essentially non-negative integers; when only used to solve the mutual exclusion problem, the range of their values will even be restricted to "0" and "1". It is the merit of the Dutch physicist and computer designer Drs.C.S.Scholten to have demonstrated a considerable field of applicability for semaphores that can also take on larger values. When there is a need for distinction, we shall talk about "binary semaphores" and "general semaphores" respectively. The definition of the P- and V-operation that I shall give now, is insensitive to this distinction.

— Dijkstra, EWD 123

Introduction

Semaphores are, in truth, a difficult concept. They sit at the foundation of synchronization problems and are among the first topics learned alongside mutexes, yet they are hard to grasp on first contact.

The usual summary of a semaphore goes something like this:

'A semaphore is a technique that resolves problems arising from unguaranteed synchronization during concurrent access to shared resources, by manipulating a counter that represents resource availability through two special atomic operations, P and V.'

That is the standard definition.

But what exactly is a shared resource? What is an atomic operation? What does resource availability mean, and what are P and V?

This post is an attempt to explain all of that.

The goal of this article is to build an understanding of the fundamentals of concurrent programming and of semaphores.

What Is a Shared Resource?

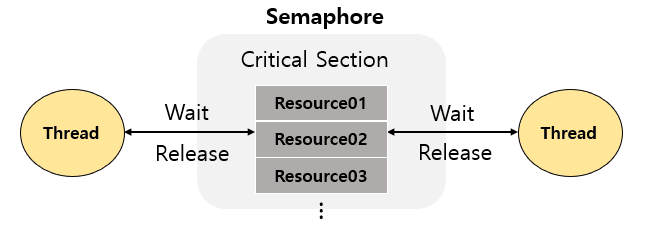

When a program runs in parallel, data that multiple threads or processes access simultaneously is called a 'shared resource'.

- Examples: variables in memory, file handles, network ports, queues, and so on.

A shared resource has state.

When multiple parties try to access and modify that state at the same time, unexpected errors can occur.

Let's Think About Shared Resources Using a Printer Analogy

Suppose there is a single printer. Imagine two people trying to use it at the same time.

- What if B starts printing while A's print job is still in progress?

- The output could be interrupted, garbled, or come out as blank pages.

-> As you can see, concurrent access to a shared resource causes conflicts!

Why Atomic Operations Are Necessary

So how can we solve this kind of problem?

The key is guaranteeing consistency of state even when multiple threads access it simultaneously, that is, securing 'atomicity.'

Take the operation 'x = x + 1', which adds 1 to a variable x. In reality it decomposes into three distinct steps:

1. Read the current value of x

2. Add 1 to it

3. Store the result back into x

Even this simple operation produces corrupted results if another process intervenes in the middle. For example, if two threads execute x = x + 1 simultaneously, the final result may increase by only 1 instead of 2, or in some cases increase by even less.

Let's look at a scenario where two threads perform x = x + 1 concurrently, expressed as an interleaving.

(Interleaving playground)

When two parties compete to change a value like this, the unexpected state that results is called a Race Condition.

Even what looks like a single-line operation actually consists of multiple steps, so we need a mechanism that blocks outside intervention during those steps.

The solution is atomic operations. An atomic operation is an indivisible unit of work that allows no external intervention whatsoever while it is executing.

Let's Return to the Printer Analogy

- When A sends a print request to the printer, B's request must wait until A's print job has fully completed, failed, or been cancelled.

- If B interrupts mid-print and starts its own output, the printouts may be mixed together or partially missing.

- Therefore, the next request (B) should be processed only after A's print state has clearly ended, so that consistency of the output state is guaranteed.

This is exactly what atomicity requires.

While printing is in progress, the printer's state must be locked as "in use,"

and the next job must not begin until a complete termination has occurred.

To handle state-based resources inside a program concurrently, we need an atomic control mechanism that cannot be interrupted mid-operation.

So, how is atomicity actually guaranteed? That is today's topic.

Dijkstra's Proposal: What Is a Semaphore?

*Special-purpose integers*

As the printer example showed, we need to be able to clearly control and observe the "in use" state from the outside. But ordinary variables alone cannot manage that state safely.

The reason is as follows:

- A variable can be read and written by anyone,

- and the value one process has just read can be changed by another process immediately afterward.

- In other words, in the time between reading a value and reacting to it, the state can change, and that change will not be reflected.

As this illustrates, the conventional approach has a structure in which the "check-decide-act" sequence is easily broken in the middle.

To overcome this structural limitation, Edsger W. Dijkstra proposed a new solution.

We introduce a special-purpose integer among shared variables; we call this a "semaphore."

— Dijkstra, EWD 123

What Is a Semaphore?

A semaphore is not a simple integer.

It is a state value with a special purpose, one whose external access is controlled.

The key point is "direct access is forbidden; control is exercised only indirectly through two operations, P and V."

among the repertoire of actions, from which the individual processes have to be constructed, two new primitives, which we call the "P-operation" and the "V-operation" respectively. The latter operations always operate upon a semaphore and represent the only way in which the concurrent processes may access the semaphores.

— Dijkstra, EWD 123

This special integer can be manipulated through only two operations:

- P operation (Proberen, "to test")

- V operation (Verhogen, "to increment")

These two operations follow these rules:

Operation | Meaning | Effect |

P(s) | wait / acquire | If the semaphore value is positive, decrement by 1 and proceed; if 0, wait |

V(s) | signal / release | Increment the semaphore value by 1 |

These operations are always performed atomically. That is, they execute in one shot with no interruption or intervention of any kind.

This is exactly the "mechanism that blocks outside intervention" we were looking for.

That is a semaphore.

Definition of the P / V Operations

A simple implementation looks like this.

// A semaphore is represented by an integer value s

typedef struct {

int s;

} Semaphore;

// P operation (wait): attempt to acquire a resource

void P(Semaphore *sem)

{

while (sem->s <= 0) {

// Wait (busy-waiting or blocking)

}

sem->s = sem->s - 1;

}

// V operation (signal): release a resource

void V(Semaphore *sem)

{

sem->s = sem->s + 1;

// After this, any waiting processes must be woken up

}One important point: in the pseudocode for P and V, the steps s = s - 1, s = s + 1, and the condition check while (s <= 0) wait; must execute as a single, indivisible atomic unit. If atomicity is broken, the same catastrophic race condition we saw with x = x + 1 will occur within the semaphore itself.

Note that the above is a conceptual representation; if you translate that code directly into C, it has a critical flaw. If another process slips in between the while check and s = s - 1, both threads can pass through the semaphore, creating a race condition.

In practice, to guarantee atomicity, you write it as shown below. (Of course, the code below borrows from the already-atomic pthread library to illustrate what P/V should look like when atomicity is guaranteed. How pthread_mutex itself is implemented is not covered at this level; the section "Where Does Atomicity Come From?" digs all the way down to the hardware.)

#include <pthread.h>

typedef struct {

int s;

pthread_mutex_t mutex;

pthread_cond_t cond;

} Semaphore;

// P operation: must be executed atomically

void P(Semaphore *sem)

{

pthread_mutex_lock(&sem->mutex);

while (sem->s <= 0) {

pthread_cond_wait(&sem->cond, &sem->mutex); // Wait using condition variable

}

sem->s = sem->s - 1;

pthread_mutex_unlock(&sem->mutex);

}

// V operation: must be executed atomically

void V(Semaphore *sem)

{

pthread_mutex_lock(&sem->mutex);

sem->s = sem->s + 1;

pthread_cond_signal(&sem->cond); // Wake up waiting process

pthread_mutex_unlock(&sem->mutex);

}For reference, the POSIX standard already provides sem_wait() and sem_post() in <semaphore.h>, so in practice you rarely need to implement semaphores yourself outside of educational contexts.

Where Atomicity Comes From: The Hardware Layer

But a question arises here. If P and V must be atomic, how is that atomicity itself guaranteed? If you protect a semaphore with a software lock, what protects that lock? This infinite regress ultimately ends at the hardware.

Every software synchronization primitive is built on top of atomic machine instructions provided by the CPU.

TAS (Test-And-Set): Reads a memory value and overwrites it with a specified value in a single operation, returning the old value.

CAS (Compare-And-Swap): Atomically executes "replace with the new value only if the current value matches the expected value." This is

CMPXCHGon x86 and the foundation of modern lock-free data structures.LL/SC (Load-Linked / Store-Conditional): A pair of instructions used on ARM and RISC-V. "Write the value only if no one has touched it since I read it."

These instructions obtain exclusivity at the memory-bus level. Software alone cannot achieve this. It is fair to say that all concurrency primitives, from semaphores and mutexes to spinlocks and lock-free queues, are ultimately an extension of a single CAS instruction.

The Problem of Busy-Waiting

Even if the hardware solves the atomicity problem, the while (s <= 0) in the pseudocode above still leaves a serious issue: busy-waiting (spin-waiting), where the CPU is continuously occupied until the condition is satisfied.

CPU waste: Time that could be spent on other useful work is consumed pointlessly.

Energy consumption: In mobile and embedded environments, this directly drains battery life.

Deadlock risk on a single-CPU system: If the waiting process does not release the CPU, the process that would call

Vcannot be scheduled, so the waiting process can never wake up. In other words, the act of waiting itself makes it impossible for the wait to end.

Modern operating systems therefore combine several mechanisms in layers to handle this "wait" process far more efficiently. This layered approach can be seen as the archetype of modern asynchronous processing.

Implementation Layers in Modern OSes

Sleep Queue (Wait Queue), Context Switching, Spinlock, Futex

The theoretical origin of all these approaches, daunting as they sound, is those simple P/V operations. Here is a brief explanation of how that theoretical origin is applied in a modern OS.

Spinlock: Short, Fast Protection

A spinlock is the simplest lock, built directly on top of hardware CAS. It is appropriate when the wait time is expected to be very short, and its advantage is that it has no context-switch overhead. Inside the kernel, spinlocks are used to protect the semaphore variable s or the sleep queue itself for very brief moments.

Sleep Queue and Context Switching: The Right Way to Wait Long

When a wait is expected to be long, there is no reason to keep holding the CPU.

When P(s) is called and s <= 0:

1. Change the current process state from RUNNING to BLOCKED

2. Insert the PCB (Process Control Block) into the semaphore’s sleep queue

3. Yield the CPU to the scheduler (trigger a context switch)

When V(s) is called:

1. Increment s by 1

2. Remove one process from the sleep queue and change its state from BLOCKED to READY

3. The scheduler will resume the process at an appropriate timeThe CPU does other work instead of the blocked process, so the waste of busy-waiting disappears. The trade-off is a context-switch cost (on the order of a few microseconds).

Futex: Linux's Hybrid Optimization

A Futex (Fast Userspace muTEX) is an optimization that handles "the majority of cases with no contention" without a system call.

Fast path (no contention): atomic update via CAS in user space, no system call

Slow path (contention occurs): enter the kernel sleep queue via

futex(FUTEX_WAIT), wake up viafutex(FUTEX_WAKE)

Because lock contention is rare in real production environments, this optimization eliminates most system-call overhead. glibc's pthread_mutex and C++'s std::mutex both use futex internally.

Putting it all together, semaphore operations in a modern OS can be summarized as follows.

Basic wait/wake: A sleep queue is used to efficiently put processes to sleep and wake them up, preventing busy-waiting.

Internal atomicity: During the brief code execution of the P and V operations themselves, spinlocks (inside the kernel) are used to safely modify the semaphore variable (

s) or the sleep queue. Those spinlocks ultimately rely on hardware CAS.Performance optimization (especially on Linux): By leveraging futex, accesses without contention are handled quickly in user space, and the kernel sleep queue is used only when contention occurs, reducing system-call overhead.

Binary Semaphore vs. General Semaphore

Category | Value Range | Use Case |

|---|---|---|

Binary Semaphore | 0 or 1 | Mutual exclusion for a single resource (similar use to a mutex) |

General Semaphore | Non-negative integer | Limited resource pools (DB connection pools, thread pools, producer-consumer buffers) |

It was the work of Dijkstra's colleague Dr. C. S. Scholten that proved the broader applicability of semaphores capable of holding larger values. This extended the semaphore from protecting a single resource to a general abstraction for managing N resources.

Semaphore vs. Mutex

At this point, readers who understand semaphores may be wondering how they differ from mutexes.

A binary semaphore and a mutex look similar on the surface but differ semantically.

Perspective | Semaphore | Mutex |

|---|---|---|

Core model | State (signal) based | Ownership based |

Who releases | Anyone can call | Only the thread that acquired it can release it |

Recursive acquisition | Not applicable (only decrements the value) | Recursive mutex allows the same thread to re-enter |

Priority inheritance | Generally not supported | Supported (mitigates priority inversion) |

Primary use | Signaling, resource counting | Critical section protection |

Key distinction: A semaphore is well-suited for signaling, as in producer-consumer patterns, while a mutex is suited for ownership declaration, meaning "this data structure is exclusively used by one thread."

A binary semaphore can be used as a substitute for a mutex, but it is inappropriate when real-time concerns like priority inversion come into play. That said, explaining mutexes properly requires explaining ownership, which risks taking this article off track, so I will cover that separately in a later post.

Back to the Printer: Controlling a Printer with a Semaphore

Applying a binary semaphore to the printer example looks like this:

1. The semaphore representing the printer's state starts at s = 1.

2. User A calls P(s), setting s = 0, and begins printing.

3. While printing is in progress, User B calls P(s), but since s <= 0, B waits.

4. After A finishes printing and calls V(s), s becomes 1, allowing B to print.

This is how a semaphore abstracts the "state" of a resource as a number,

and controls concurrency by changing that number atomically.

The binary semaphore described above is suited to a situation where there is only one printer in the office and it must be used exclusively by one person at a time (mutual exclusion).

s=1 meant 'printer available' and s=0 meant 'printer in use'.

But what if the office has several printers of equal capability (for example, 3)?

In that case, multiple people can use a printer at the same time, but access must be controlled so that the number of concurrent users does not exceed the number of available printers.

This is exactly when a General Semaphore (also called a Counting Semaphore) is used.

A general semaphore s holds a non-negative integer value representing the number of available resources.

Example: Controlling Three Printers with a General Semaphore

1. Initial state:

- Since there are 3 printers in the office, the semaphore s is initialized to 3: s = 3.

- This means "there are currently 3 printers available."

2. User A requests a printer (P(s) operation):

- Calls P(s).

- The current value of s (3) is greater than 0, so s is decremented by 1, making s = 2.

- User A is assigned a printer and begins printing. (2 printers are now available)

3. User B requests a printer (P(s) operation):

- Calls P(s).

- The current value of s (2) is greater than 0, so s is decremented by 1, making s = 1.

- User B is also assigned a printer and begins printing. (1 printer is now available)

4. User C requests a printer (P(s) operation):

- Calls P(s).

- The current value of s (1) is greater than 0, so s is decremented by 1, making s = 0.

- User C is also assigned a printer and begins printing. (0 printers are now available)

5. User D requests a printer (P(s) operation):

- Calls P(s).

- The current value of s (0) is less than or equal to 0, so User D is caught by the while (s <= 0) wait; condition inside the P(s) operation and enters a waiting state (waiting until a printer becomes available).

6. User A finishes printing and releases the printer (V(s) operation):

- User A finishes printing and calls V(s).

- s is incremented by 1, making s = 1. (1 printer is now available)

- When the V(s) operation completes, User D, who was waiting in the P operation, wakes up and checks the value of s again. Since s is now 1, User D sets s to 0 and begins using the printer.

The essence of a general semaphore is that it goes beyond a simple binary locked/unlocked state to control concurrent access to a pool of multiple limited resources and to accurately track the number of resources available.

(Printer example playground)

Closing Thoughts: Value as a Theoretical Origin

Semaphores remain central to kernel-level synchronization and stand as a prime example of the state-based access model.

Looking more broadly, modern concurrency primitives, including mutexes, condition variables, Go channels, Rust tokio task queues, and the JavaScript async/await runtime,

all share the same skeleton: "atomic state update + wait queue." The P/V operations Dijkstra introduced 60 years ago are the most bare-bones expression of that skeleton. Every implementation that followed is nothing more than performance optimizations (futex, lock-free data structures) and abstractions (async runtimes, channels) layered on top of it.

Therefore, the single-line requirement that "semaphore pseudocode must be atomic" is the archetype of a design constraint that runs through everything from the hardware CAS instruction to the scheduler of a modern runtime.

At first glance, a semaphore appears to be nothing more than a simple integer. But it is the most fundamental abstraction mechanism that allows a system to accurately represent the state of a resource, control it safely, and share it in a predictable way.

We must not forget that in this one small integer lies the ordering of countless processes, the avoidance of conflicts, and the stability of the entire system.