How Did Microservices Architecture Come to Be?

From Monolith to Microservices

(Building Microservices, a well-known introductory text on MSA)

Before we begin...

My own website, MAKONEA, is built on MSA,

and requests to "build it with a Microservices Architecture" are growing increasingly common in freelance projects as well.

Today it feels almost like an assumed norm, something you simply must do, but it was not always that way.

In this post, I want to trace why MSA emerged

and what problems it was chosen to solve,

following the thread through its historical context.

Technical choices always emerge from the limitations and failures of what came before.

To truly understand MSA,

we first need to look at what existed before it, and why those approaches fell short.

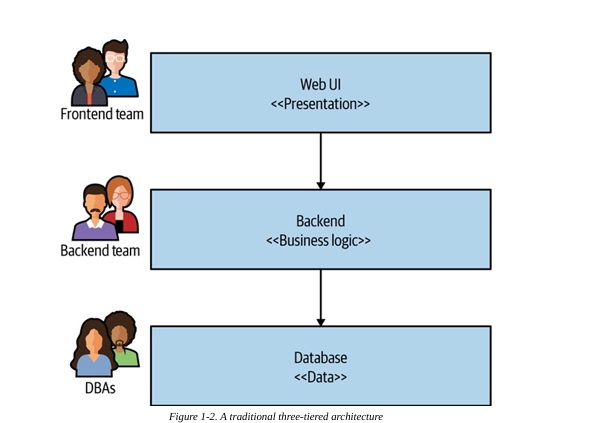

(A traditional Three-tier architecture Monolith[1])

1. Monolith

Many web applications have traditionally been designed around a Three-tier architecture.

The presentation layer handles the UI, the business logic layer handles rules and processing, and the data layer handles storage and retrieval.

Any organization that designs a system…will produce a design whose structure is a copy of the organization’s communication structure.

— Melvin Conway, “How Do Committees Invent?”

Why did the Three-tier architecture spread so widely in the first place? In Building Microservices, Sam Newman answers this question by invoking Conway's Law: a system's structure reflects the communication structure of the organization that built it. Traditional IT organizations were structured around technical competencies, with separate database, backend, and frontend teams, and the Three-tier architecture was a direct reflection of that structure. In other words, Three-tier prevailed not because it was the technically "correct" answer, but because it was optimized for the organizational structure of the time.

One important point deserves emphasis here: this separation into layers is a logical separation, not a separation of deployment units.

No matter how elegantly you slice your code into three tiers and apply design patterns, when you hit the build button everything gets bundled into a single deployable artifact and runs as a single process. That is a Monolith. The word comes from Greek: monos (single) + lithos (stone), a massive structure carved from a single block.

The problem with a Monolith is not that it lacks structure. Its internal structure can be perfectly well organized. The problem is that the boundaries of change, deployment, scaling, and failure are all tied to a single application unit.

And that tight coupling is actually a strength when the system is small.

Network latency approaches zero, because calls between modules are in-memory function calls rather than network requests. You get perfect data consistency from a single database without ever having to think about distributed transactions. You can trace the entire code flow in one place. For an early product, a small team, or a rapid validation phase, this simplicity is the greatest advantage.

Some MSA evangelists, bootcamp instructors and YouTubers among them, tempt you into slicing everything into tiny pieces from day one, but taking on network partitions and infrastructure complexity before your business has even been validated is a recipe for self-destruction.

Think about it: if you have no users, why make things more complicated? There is no reason to. You would not build an elaborate, automated barn when there is not a single cow inside.

The first misconception to discard when trying to understand MSA is the idea that "Monolith is an outdated, bad architecture." A Monolith is not a failed architecture. It is an architecture whose cost structure changes once a system crosses a certain scale.

Those costs rise along three axes.

Deployment coupling. Even fixing a single login bug requires redeploying the entire application, order module included. The blast radius of every deployment is the whole system. Deployment cycles grow longer, longer cycles lead teams to bundle even larger changes into a single release, and larger releases carry more risk. It is a vicious cycle.

Scaling coupling. Even when traffic spikes on the product browsing service, you have to scale out the payment servers along with everything else. Because every feature lives in the same process, there is no way to selectively scale only the components that are actually under load.

Team coupling. As the team grows, everyone touches the same codebase. Merge conflicts, waiting on builds, and the constant question of "who changed this code?" become everyday realities. The cost of collaborating on a single codebase grows faster than the team itself.

If your business has not yet been validated and you are not yet experiencing these three problems, a Monolith is the right choice.

MSA is a card you play at the point when those costs genuinely become too heavy to bear.

2. SOA: The First Attempt, and Its Failure

The first formal response to the limitations of the Monolith was SOA (Service-Oriented Architecture).


The core idea behind this movement, driven by the enterprise sector in the early 2000s, was simple.

Split the system into services, and have those services communicate through standardized interfaces.

The direction was right. The problem was in how it was implemented.

SOA genuinely swept across the industry at the time. Many companies adopted the architecture to improve reusability and cross-organizational collaboration, but the results fell short of expectations. Design and implementation consumed an enormous amount of time, structures grew increasingly complex, and costs ballooned significantly. In the end, a large number of projects ended in failure, and the industry gradually turned away from SOA. To borrow Mark Richards's words, SOA was "an architecture that was large, expensive, complex, and took far too long to implement." This is the critical point: the problem was not the idea itself, but the way that idea was executed.

The standard SOA stack consisted of SOAP (Simple Object Access Protocol), WSDL (Web Services Description Language), and an ESB (Enterprise Service Bus). In theory, the ESB was an intelligent middleware layer at the center of all inter-service communication, handling routing, message transformation, and orchestration in one place.

In practice, it was a different story.

As the ESB indiscriminately absorbed business logic and routing rules and grew bloated, individual services became tightly coupled to it. This was the trap of "smart pipes, dumb endpoints": the cohesion of individual services collapsed, and control over the system flowed back toward the center. Teams had broken apart the Monolith to build a distributed system, yet ended up re-erecting a massive Single Point of Failure (SPOF) at the center, now under the name ESB.

SOAP and WSDL were an XML schema nightmare. Adding a single service required writing a WSDL, generating stubs, and aligning contracts on both sides, a process that could take days. The gap between SOA's promise that "services should be easy to add" and the reality on the ground was vast.

SOA's failure was ultimately a failure of philosophy rather than technology. Services were separated, but coupling was never meaningfully reduced; in trying to eliminate complexity, teams introduced a new form of complexity in the ESB.

The microservice community favours an alternative approach: smart endpoints and dumb pipes. Applications built from microservices aim to be as decoupled and as cohesive as possible. - James Lewis and Martin Fowler[2]

The lessons from that failure became the negative example that shaped MSA. This is precisely why MSA enshrines "smart endpoints, dumb pipes" as a core principle.

3. Amazon's Turning Point: The 2002 Bezos Mandate

In 2002, while SOA was still gaining ground in the enterprise world, a historic internal email was circulating inside Amazon.

(Amazon's internal directive, as recalled by Steve Yegge in his 2011 "Google Platforms Rant"[3])

This internal directive is known today as the "Bezos Mandate" or "Bezos API Memo." Its key points are as follows.

- Every team must expose its data and functionality through service interfaces.

- Teams must communicate only through those interfaces.

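- No other form of interprocess communication is permitted: no direct linking, no direct reads of another team's data store, no shared-memory model, no back doors. The only communication allowed is via service interface calls over the network.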

- It doesn't matter what technology you use. HTTP, Corba, Pubsub, whatever.

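- All service interfaces, without exception, must be designed from the ground up to be externalizable, that is, exposable to developers outside the company.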

- Anyone who doesn't comply will be fired.
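To make the rule concrete, here is a minimal sketch, assuming a hypothetical inventory team and checkout team (none of these names come from Amazon). The inventory team's data store stays private; the only thing the checkout team may depend on is the published interface. In a real system that interface would be a network call over HTTP, RPC, or pub/sub; a Python `Protocol` stands in for it here.

```python
from typing import Protocol


class InventoryService(Protocol):
    """The published service interface: the only thing other teams may use."""

    def available(self, sku: str) -> int: ...


class InventoryTeamService:
    """Hypothetical inventory team implementation behind the interface."""

    def __init__(self) -> None:
        # Private data store: the owning team can change its schema freely.
        self._stock: dict[str, int] = {"book-42": 3}

    def available(self, sku: str) -> int:
        return self._stock.get(sku, 0)


class CheckoutTeam:
    """Hypothetical consumer: it is handed the interface, never the database."""

    def __init__(self, inventory: InventoryService) -> None:
        self._inventory = inventory

    def can_order(self, sku: str, quantity: int) -> bool:
        return self._inventory.available(sku) >= quantity


checkout = CheckoutTeam(InventoryTeamService())
print(checkout.can_order("book-42", 2))  # True
print(checkout.can_order("book-42", 5))  # False
```

Python only marks `_stock` as private by convention. The mandate enforced the same boundary organizationally and over the network, which is a far stronger guarantee than a naming convention.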

What makes this mandate significant is not the technology stack it prescribed, but the force behind it. At the time, Amazon was growing on top of a massive monolithic system. Teams were directly reading each other's databases and bolting on features by linking libraries directly, with no internal APIs in between. The Bezos Mandate severed those connections. It placed every capability behind an API and required all inter-team communication to go through network-based service interfaces. Initially, it was simply a rule to reduce internal coupling. But this change prompted Amazon to start thinking of its own internal capabilities as "services." As Yegge put it, Amazon transformed into an organization that approached every design decision services-first. Building on that foundation, infrastructure capabilities also became services deliverable via API, laying the groundwork for AWS and leading to the launch of S3 and EC2 in 2006. An internal rule created to break apart a monolith ended up becoming one of the critical foundations of the cloud computing era.

4. Netflix: Resilience Built from Chaos

In 2008, Netflix suffered a serious database corruption incident.

(Netflix's retrospective post[4])

In a 2016 retrospective, Netflix explained that the 2008 database corruption incident brought DVD shipping to a halt for three days,

and that this event became the starting point for a seven-year migration to AWS.

That incident left Netflix's engineering team with two lasting lessons.

First, a centralized monolithic system means that a single failure can bring down the entire service. Second, failures cannot be prevented; you must build systems that survive them.

In 2009, Netflix began migrating from its data centers to AWS. This was not a simple infrastructure move. It was a seven-year journey to decompose a monolithic system into hundreds of microservices.

In the process, Netflix built several key tools.

On Monday, the company open sourced its “Chaos Monkey,” software that randomly turns off virtual machines running beneath its streaming service, a way of simulating the small outages the service will inevitably face day after day. This means that anyone can use the tool or even modify its source code. - Wired, “Netflix Abuses Amazon With Monkeys. Now You Can Too”, 2012[5]

Chaos Monkey. An automated tool that randomly terminates instances in the production environment. It started from the idea that "the best way to verify whether a system can withstand failure is to actually cause one." The very existence of Chaos Monkey compelled engineers to write code that assumed failures would happen.
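The core idea fits in a few lines. This is a toy simulation, not Netflix's tool: a hypothetical fleet is modeled as a dict mapping service names to instance IDs, and the "monkey" terminates one random instance per service. Services that survive are the ones that were built with redundancy.

```python
import random


def chaos_monkey(fleet: dict[str, list[str]], rng: random.Random) -> list[str]:
    """Terminate one randomly chosen instance in every service group.

    Toy model only: the real Chaos Monkey acts on cloud instances and has its
    own scheduling and configuration.
    """
    terminated = []
    for instances in fleet.values():
        if instances:
            victim = rng.choice(instances)
            instances.remove(victim)  # mutate the fleet in place
            terminated.append(victim)
    return terminated


def healthy(fleet: dict[str, list[str]], service: str) -> bool:
    # A service survives as long as at least one instance is still running.
    return bool(fleet.get(service))


fleet = {"api": ["api-1", "api-2", "api-3"], "search": ["search-1"]}
killed = chaos_monkey(fleet, random.Random(7))
print(killed)
print({name: healthy(fleet, name) for name in fleet})  # {'api': True, 'search': False}
```

The single-instance `search` service goes down, which is exactly the kind of weakness Chaos Monkey was meant to surface before a real outage does.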

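Eureka. A service registry. It solved the problem of how hundreds of service instances, constantly being started and stopped, could find one another: each instance registers itself and sends periodic heartbeats, and clients look up the live instances of a service by name.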
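A minimal in-memory sketch of the registry idea follows. It is not Eureka's API: the class, the method names, and the 90-second lease TTL are assumptions for illustration, and the clock is passed in explicitly so the behavior is easy to follow.

```python
from dataclasses import dataclass


@dataclass
class Lease:
    address: str
    last_renewed: float  # time of the most recent registration or heartbeat


class ServiceRegistry:
    """In-memory registry with heartbeat-based leases (hypothetical API).

    Instances register themselves and renew their lease periodically; an
    instance whose lease goes unrenewed for longer than `ttl` seconds is
    treated as dead and no longer returned by lookups.
    """

    def __init__(self, ttl: float = 90.0) -> None:
        self.ttl = ttl
        self._leases: dict[str, dict[str, Lease]] = {}

    def register(self, service: str, instance_id: str, address: str, now: float) -> None:
        self._leases.setdefault(service, {})[instance_id] = Lease(address, now)

    def heartbeat(self, service: str, instance_id: str, now: float) -> None:
        self._leases[service][instance_id].last_renewed = now

    def deregister(self, service: str, instance_id: str) -> None:
        # Graceful shutdown: remove the instance now instead of waiting for expiry.
        self._leases.get(service, {}).pop(instance_id, None)

    def lookup(self, service: str, now: float) -> list[str]:
        # Return only instances whose lease is still fresh.
        return sorted(
            lease.address
            for lease in self._leases.get(service, {}).values()
            if now - lease.last_renewed <= self.ttl
        )


registry = ServiceRegistry(ttl=90.0)
registry.register("orders", "orders-1", "10.0.0.5:8080", now=0)
registry.register("orders", "orders-2", "10.0.0.6:8080", now=0)
registry.register("orders", "orders-3", "10.0.0.7:8080", now=0)
registry.heartbeat("orders", "orders-1", now=60)  # orders-2 silently stops heartbeating
registry.deregister("orders", "orders-3")         # orders-3 shuts down cleanly
print(registry.lookup("orders", now=120))  # ['10.0.0.5:8080']
```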

(Netflix blog post introducing Hystrix[6])

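Hystrix. A latency and fault tolerance library built around the circuit breaker pattern. When calls to a dependent service start failing or timing out, the circuit "opens" and subsequent requests fail immediately (Fail Fast) or fall back to a default response, instead of tying up resources waiting on a dead dependency. This keeps one failure from propagating across the entire system.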
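Below is a minimal circuit breaker sketch with closed, open, and half-open states. It is a simplified stand-in, not Hystrix's API: Hystrix tripped on an error percentage over a rolling window, while this version counts consecutive failures. The class name, thresholds, and injectable clock are assumptions for illustration.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised immediately, without calling the dependency, while the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 5.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout  # seconds to wait before trial calls
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"  # trial calls are allowed through again
        return "open"

    def call(self, fn: Callable[[], T]) -> T:
        if self.state == "open":
            raise CircuitOpenError("failing fast: dependency marked unhealthy")
        try:
            result = fn()
        except Exception:
            self.failures += 1
            # Trip the breaker when failures reach the threshold,
            # or re-trip it if a half-open trial call fails.
            if self.state == "half-open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Any success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result


# Usage with a fake clock so the timeline is deterministic.
now = [0.0]
breaker = CircuitBreaker(failure_threshold=2, reset_timeout=10.0, clock=lambda: now[0])


def payment_service() -> str:
    raise ConnectionError("payment service timed out")


for _ in range(2):
    try:
        breaker.call(payment_service)
    except ConnectionError:
        pass
print(breaker.state)  # open

try:
    breaker.call(payment_service)  # rejected without calling the dependency
except CircuitOpenError as exc:
    print(exc)  # failing fast: dependency marked unhealthy

now[0] = 10.0
print(breaker.state)                 # half-open
print(breaker.call(lambda: "paid"))  # paid
print(breaker.state)                 # closed
```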

Netflix open-sourced these tools in 2012. This collection, known as Netflix OSS, became the reference implementation for "how to actually operate microservices" at the time. The patterns Netflix created went on to form the standard vocabulary of MSA.

5. "Microservices" — The Day It Got a Name

Netflix, eBay, Amazon, LinkedIn, Google: organizations running large-scale systems were decomposing their systems in similar ways, each under a different name. These patterns needed a name.

In May 2011, at a workshop of software architects held near Venice, Italy, participants compared the architectural patterns each had been developing independently and began using the term "microservices" to describe them[7]. James Lewis then presented the concept formally at a conference in 2012, and by 2014 an article he co-authored with Martin Fowler had cemented it as the industry's standard term.

That article characterized the style roughly as follows:

- A suite of small services, each built around a single business capability

- Each running as a separate process and communicating through lightweight mechanisms such as HTTP APIs

- Each service independently deployable

- Each service free to use a different language and a different data store from the others (Polyglot)

- Autonomy of each service takes priority over centralized orchestration

Worth noting is that this article did not "invent" MSA. Amazon, Netflix, and eBay had already been running this pattern for years. The contribution of Fowler and Lewis was giving the industry a common language. Once the pattern had a name, a community formed around it, and the pattern spread rapidly.

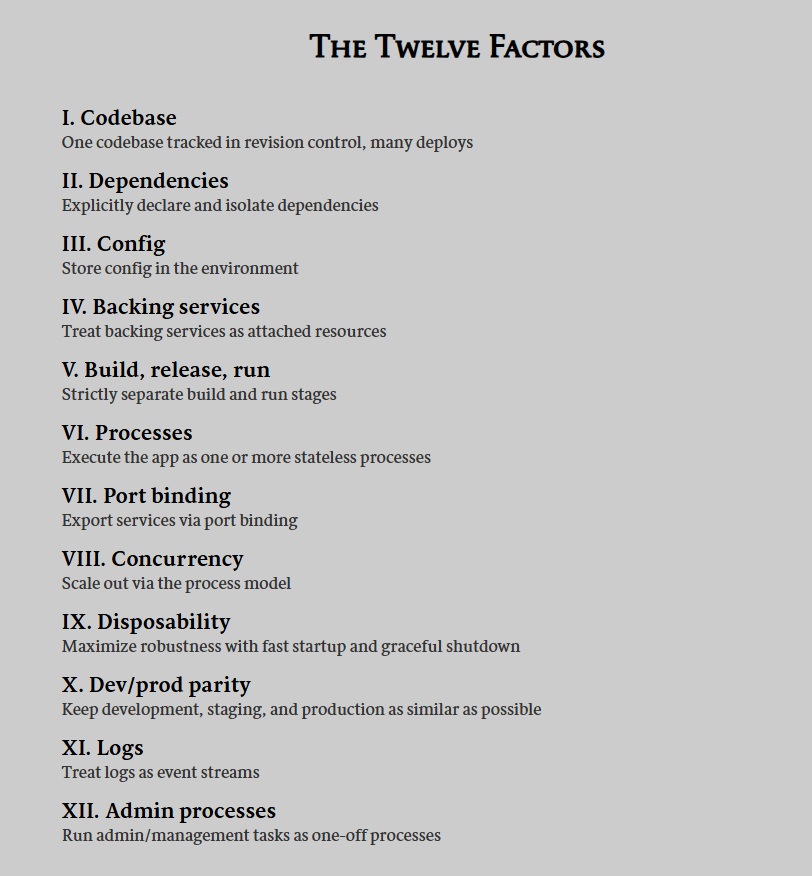

Also in 2011, Adam Wiggins of Heroku published the Twelve-Factor App[8] methodology: twelve principles to follow when building SaaS applications in cloud environments.

Codebase, dependencies, configuration, backing services, stateless processes, and more: these principles spelled out the operational foundation that MSA needs in order to actually work.
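As one concrete example, the "Config" factor says configuration belongs in the environment rather than in code, so the same build can be promoted from staging to production unchanged. A minimal sketch, assuming hypothetical variable names `DATABASE_URL`, `PORT`, and `DEBUG`; the environment is passed in as a mapping so the function is easy to exercise without touching the real process environment.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class Config:
    database_url: str
    port: int
    debug: bool


def load_config(env: Optional[Mapping[str, str]] = None) -> Config:
    """Build configuration from environment variables only.

    The same artifact runs everywhere; only the environment differs.
    """
    env = os.environ if env is None else env
    try:
        database_url = env["DATABASE_URL"]  # required: fail loudly if missing
    except KeyError:
        raise RuntimeError("DATABASE_URL must be set") from None
    return Config(
        database_url=database_url,
        port=int(env.get("PORT", "8080")),
        debug=env.get("DEBUG", "false").lower() in {"1", "true", "yes"},
    )


staging = load_config({"DATABASE_URL": "postgres://staging-db/app", "DEBUG": "true"})
production = load_config({"DATABASE_URL": "postgres://prod-db/app", "PORT": "9000"})
print(staging)
print(production)
```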

6. The Container Revolution: Infrastructure Catches Up

There was a wide gap between understanding MSA as a concept and actually operating it in production. Deploying, isolating, and versioning hundreds of services was too complex and too expensive with the infrastructure that existed at the time.

In 2013, Docker went a long way toward closing that gap.

"Hey everyone... I'm Solomon, I work at dotCloud... we've been working on open sourcing that [Linux containers] and we haven't shown it to anyone... this is actually the first time we show anything outside of the dotCloud office, so it's probably going to blow up on me." - Solomon Hykes, PyCon 2013[9]

When Solomon Hykes introduced Docker at PyCon 2013 with a five-minute demo, many in the audience responded with something like, "I'm not sure what this is, but it feels important." The concept of containers itself was not new; Linux cgroups and namespaces already existed. Docker's innovation was turning containers into a tool that developers could actually use.

After Docker, it became possible to package each MSA service as an independent container, isolating dependencies inside an image and running that same image identically across development and production environments.

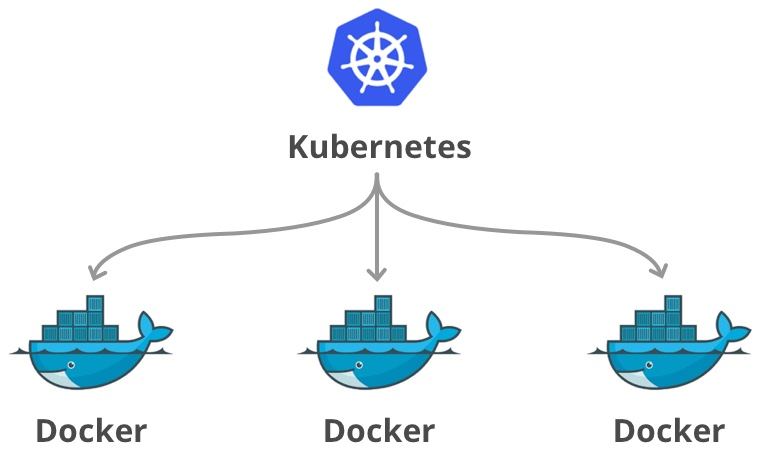

That revealed the next problem: how do you manage hundreds of containers?

In 2014, Google announced Kubernetes.

We present a summary of the Borg system architecture and features, important design decisions, a quantitative analysis of some of its policy decisions, and a qualitative examination of lessons learned from a decade of operational experience with it.

Reference: Large-scale cluster management at Google with Borg (2015)[10]

Google had already been running an internal cluster manager called Borg for about a decade, launching billions of containers every week.

Kubernetes is the direct heir to that Borg legacy.

The combination of Docker and Kubernetes made Microservices Architecture viable in practice. Things that previously required an infrastructure team at Netflix's scale became achievable by small teams as well.

Aside: Hidden Metaphors and Wordplay in the Names

The word "Kubernetes" comes from the ancient Greek word for "helmsman" or "pilot," and the name carries a remarkably rich nautical metaphor.

Recall Docker's logo: a whale (the vessel) swimming through the sea with containers (cargo) stacked on its back. Kubernetes signals its intent to serve as the helmsman commanding the entire fleet, ensuring that the hundreds or thousands of container boxes (Docker containers) drifting across that sea reach their correct destinations without tangling. (The official Kubernetes logo is, fittingly, a blue ship's steering wheel.)

Kubernetes is also commonly abbreviated as 'k8s' because there are exactly 8 letters (u-b-e-r-n-e-t-e-s) between the 'K' and the 's', so the middle is collapsed into that digit. In English-speaking communities it is typically read aloud as "K-eights."

This style of abbreviation is actually a well-established convention in the tech industry known as a "numeronym." The idea is simple: typing out a long word every time is tedious, so you keep the first and last letters and replace everything in between with a number indicating how many letters were dropped.

- i18n: internationalization → i + 18 letters + n

- a11y: accessibility → a + 11 letters + y

- o11y: observability → o + 11 letters + y
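The rule is mechanical enough to fit in one small function. A minimal sketch; the cutoff for short words is an arbitrary choice of this example, not part of any convention:

```python
def numeronym(word: str) -> str:
    """Keep the first and last letters and replace the middle with its length."""
    if len(word) <= 3:
        return word  # too short to be worth abbreviating
    return f"{word[0]}{len(word) - 2}{word[-1]}"


for word in ["internationalization", "accessibility", "observability", "Kubernetes"]:
    print(word, "->", numeronym(word))
# internationalization -> i18n
# accessibility -> a11y
# observability -> o11y
# Kubernetes -> K8s
```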

It is worth noting, however, that K3s, the widely used lightweight distribution for local and edge environments, is not an abbreviation of any real word. The developers at Rancher Labs (now SUSE) who created K3s wanted a Kubernetes installation half the size in terms of memory footprint, and the name is a play on that goal.

Kubernetes is a 10-letter word, so something half as big would be a 5-letter word. Compress a 5-letter word that starts with K and ends with s using the numeronym rule described earlier, and you get K + 3 letters + s = K3s. There is no long form behind it. The logic is nothing short of miraculous.

This is exactly the kind of wordplay that makes software development a little harder for developers who didn't grow up in an English-speaking context.

7. The Present: Emerging Challenges

As MSA spread, a new set of problems moved to the forefront.

Observability. When a single request passes through 10 services before completing, how do you know which service introduced the latency? In a single process, one stack trace tells the whole story, but in MSA the trail goes cold at every service boundary. Distributed tracing tools such as Jaeger, Zipkin, and OpenTelemetry were built specifically to address this problem.
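The mechanism underneath distributed tracing is context propagation: every hop forwards the same trace ID and records its own span ID, so a backend can stitch the hops back into one timeline. The sketch below hand-rolls the W3C Trace Context `traceparent` header (`version-traceid-parentid-flags`) for a hypothetical gateway → orders → payments path. In practice, OpenTelemetry SDKs handle this propagation for you; this only illustrates the mechanism and skips parts of the spec, such as rejecting all-zero IDs.

```python
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class SpanContext:
    trace_id: str  # 32 lowercase hex chars, shared by every hop of one request
    span_id: str   # 16 lowercase hex chars, unique to this hop

    def to_traceparent(self) -> str:
        # Format: version-traceid-parentid-flags; flags "01" marks the trace as sampled.
        return f"00-{self.trace_id}-{self.span_id}-01"


def parse_traceparent(header: str) -> SpanContext:
    version, trace_id, span_id, _flags = header.split("-")
    if version != "00" or len(trace_id) != 32 or len(span_id) != 16:
        raise ValueError(f"unsupported traceparent: {header!r}")
    return SpanContext(trace_id, span_id)


def start_trace() -> SpanContext:
    # The first service on the request path mints a new trace ID.
    return SpanContext(secrets.token_hex(16), secrets.token_hex(8))


def continue_trace(incoming: str) -> SpanContext:
    # Downstream services keep the caller's trace ID and mint a new span ID.
    parent = parse_traceparent(incoming)
    return SpanContext(parent.trace_id, secrets.token_hex(8))


gateway = start_trace()
orders = continue_trace(gateway.to_traceparent())   # header sent gateway -> orders
payments = continue_trace(orders.to_traceparent())  # header sent orders -> payments
print(gateway.trace_id == orders.trace_id == payments.trace_id)  # True
print(payments.to_traceparent())
```

A real tracer would also record each span's parent ID and start and end timestamps, which is what lets a backend such as Jaeger or Zipkin show which hop the latency came from.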

Service Mesh. As the number of services grows, reimplementing inter-service communication policies (encryption, retries, timeouts, circuit breaking) inside every individual service becomes impractical. Service meshes such as Istio and Linkerd move this logic into a sidecar proxy that runs next to each service (Istio uses Envoy as its proxy), so it is handled outside the service's own code.
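The kind of policy a sidecar applies can be sketched in-process. Below, retries with exponential backoff are layered around a plain function that contains only business logic, mirroring the separation a mesh provides at the network level. The `RetryPolicy` fields and default values are assumptions for illustration, not Istio or Linkerd configuration, and per-attempt timeouts are left out for brevity. The `sleep` function is injectable so the backoff can be observed without actually waiting.

```python
import time
from dataclasses import dataclass
from typing import Callable, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class RetryPolicy:
    # Hypothetical values; a mesh would read equivalents from its own configuration.
    max_attempts: int = 3
    base_delay: float = 0.1  # seconds; doubled after each failed attempt
    retry_on: tuple[type[BaseException], ...] = (ConnectionError, TimeoutError)


def call_with_policy(fn: Callable[[], T], policy: RetryPolicy,
                     sleep: Callable[[float], None] = time.sleep) -> T:
    """Apply retries with exponential backoff around a plain call."""
    for attempt in range(1, policy.max_attempts + 1):
        try:
            return fn()
        except policy.retry_on:
            if attempt == policy.max_attempts:
                raise  # out of attempts: surface the last error
            sleep(policy.base_delay * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")


# Business logic only: no retry code inside.
attempts = []


def check_inventory() -> str:
    attempts.append(1)
    if len(attempts) < 3:
        raise ConnectionError("connection reset")
    return "in stock"


delays = []
result = call_with_policy(check_inventory, RetryPolicy(), sleep=delays.append)
print(result, delays)  # in stock [0.1, 0.2]
```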

Distributed Monolith. This is the most common trap in MSA. You split the system into multiple services, but those services call one another synchronously in a long chain: A calls B, B calls C, C calls D. In practice, a single request passes through every service in series. If one service slows down, the entire chain stalls. You have simply moved the monolith into a distributed environment; the coupling has not decreased at all.
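A quick back-of-the-envelope calculation shows why long synchronous chains hurt. Assuming four hypothetical services that each respond in a few dozen milliseconds and are each 99.9% available, and assuming failures are independent, end-to-end latency is the sum of the hops and end-to-end availability is the product:

```python
from math import prod

# Hypothetical per-service numbers for a synchronous chain A -> B -> C -> D.
latency_ms = {"A": 20, "B": 30, "C": 25, "D": 25}
availability = {"A": 0.999, "B": 0.999, "C": 0.999, "D": 0.999}


def chain_latency(latencies: dict[str, float]) -> float:
    # Each hop waits for the next one, so latencies add up.
    return sum(latencies.values())


def chain_availability(availabilities: dict[str, float]) -> float:
    # The request succeeds only if every hop succeeds (independent failures assumed).
    return prod(availabilities.values())


print(chain_latency(latency_ms))                  # 100
print(f"{chain_availability(availability):.4%}")  # 99.6006%

# One slow dependency stalls the whole chain.
slow_c = {**latency_ms, "C": 500}
print(chain_latency(slow_c))                      # 575
```

Every service added to the chain makes the whole request slower and less available, which is why splitting a monolith without loosening these synchronous dependencies buys the costs of distribution without its benefits.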

The reason this trap matters is that MSA's core goals, independent deployment and independent scaling, both depend entirely on drawing service boundaries correctly. Draw them wrong and you inherit all the complexity of distribution while gaining none of the benefits.

Crucially, MSA should not be viewed simply as "a better architecture." The progression from Monolith to SOA to MSA is not a journey toward a more correct answer; it is a process of choosing a different kind of complexity at each stage. Programming is fundamentally about reducing the space of possible states, and architecture is no different. Every line of code constrains your degrees of freedom, and programming is the ongoing act of applying those constraints and asking how well they align with your actual requirements. The Monolith traded away deployment and scaling freedom in exchange for simplicity. SOA attempted service separation but chose centralized control. MSA gave up centralization and accepted operational complexity in return. It is therefore not "a better structure" but the result of choosing a different set of constraints.

Closing: Patterns That Emerge from History

The history of MSA can be summarized in a single sentence.

An architecture that started from the coupling problems of the Monolith, avoided the excessive centralization of SOA, and ultimately settled into a practical form on top of cloud and container infrastructure.

There is a pattern that keeps emerging from this history.

| Era | Key Technologies | Problems Solved | Problems Created |
|---|---|---|---|
| Early 2000s | Monolith | Simplicity, rapid development | Deployment / scaling / team coupling |
| 2002~2008 | SOA + ESB | Attempted service separation | ESB centralization, SOAP complexity |
| 2009~2013 | Early MSA (Netflix, Amazon) | True independent deployment and scaling | Operational complexity, lack of observability |
| 2013~2015 | Docker + Kubernetes | Container-based infrastructure standardization | Orchestration learning curve |
| 2016~Present | Service Mesh, Observability | Inter-service communication policy + visibility | Increased complexity |

Each layer solved the problems of the previous layer while creating new ones. Technological evolution has always worked this way.

So when someone asks, "Should we move to MSA too?", the question itself is wrong. There is no unconditional right answer in architecture, and no Silver Bullet.

The right question is this: "Given our current business scale and organizational structure, do we urgently need the freedom in deployment and scaling that MSA offers, enough to pay the enormous bill of 'operational complexity' it demands?"

If there are no cattle in the barn at all, the simplicity of a Monolith is the right answer. But if traffic and your development organization have grown explosively and you have reached a breaking point where the existing coupling can no longer be tolerated, then you must willingly embrace the complexity of containers and distributed systems.

Ultimately, designing system architecture is not a journey to find a perfect structure. It is simply the act of wisely choosing the kind of pain your organization can bear right now, or must bear.

Yet people repeatedly make the wrong choices.

They adopt MSA not because they have a problem, but because it looks impressive. They stick with a Monolith not because it fits, but because it feels familiar. Technology is a matter of choice, but most failures come not from the choice itself, but from the judgment behind it.

Footnotes

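[1] Sam Newman, Building Microservices: Designing Fine-Grained Systems, 2nd ed., Figure 1.2

[2] James Lewis and Martin Fowler, "Microservices", 2014. https://martinfowler.com/articles/microservices.html

[3] Steve Yegge, "Stevey's Google Platforms Rant", 2011. https://gist.github.com/chitchcock/1281611

[4] Netflix, "Completing the Netflix Cloud Migration", 2016. https://about.netflix.com/en/news/completing-the-netflix-cloud-migration

[5] Wired, “Netflix Abuses Amazon With Monkeys. Now You Can Too”, 2012. https://www.wired.com/2012/07/netflix-4/

[6] Netflix Technology Blog, "Introducing Hystrix for Resilience Engineering", 2012. https://netflixtechblog.com/introducing-hystrix-for-resilience-engineering-13531c1ab362

[7] Nicola Dragoni et al., "Microservices: yesterday, today, and tomorrow", 2017.

[8] Adam Wiggins, "The Twelve-Factor App". https://12factor.net/

[9] Solomon Hykes, talk at PyCon 2013. https://www.youtube.com/watch?v=wW9CAH9nSLs

[10] Abhishek Verma et al., "Large-scale cluster management at Google with Borg", EuroSys 2015. https://research.google/pubs/large-scale-cluster-management-at-google-with-borg/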