Generic programming centers around the idea of abstracting from concrete, efficient algorithms to obtain generic algorithms that can be combined with different data representations to produce a wide variety of useful software. For example, a class of generic sorting algorithms can be defined which work with finite sequences but which can be instantiated in different ways to produce algorithms working on arrays or linked lists.

Four kinds of abstraction—data, algorithmic, structural, and representational—are discussed, with examples of their use in building an Ada library of software components. The main topic discussed is generic algorithms and an approach to their formal specification and verification, with illustration in terms of a partitioning algorithm such as is used in the quicksort algorithm. It is argued that generically programmed software component libraries offer important advantages for achieving software productivity and reliability.

Alexander A.Stepanov 1988

What Is Generic Programming?

Generic programming can be summarized as a programming approach that focuses on the technique of allowing a single piece of code to work with multiple data types rather than being bound to a specific one,

thereby increasing reusability.

So where should we begin? Let's find that starting point.

We will start by asking why Generic Programming emerged.

Generic programming began as a differentiation from OOP, which was on the rise in the 1980s.

In the early 1980s, OOP began to dominate programming, centered around the concepts of 'objects' and 'classes'.

However, this approach had clear limitations.

1. Type-dependent code: Classes were written to operate only on specific data types.

For example, a function that sorts an integer array and a function that sorts a string array share the same algorithm, yet they had to be written separately.

2. Limited reusability: OOP could address some problems through inheritance and polymorphism, but constraints on code reusability remained.

Generic programming emerged to overcome these problems.

As noted in the preface of the paper mentioned above,

the focus can be placed on obtaining general algorithms that can be combined with diverse data representations.

Generic programming has many characteristics, but modern generic programming centers on the following three.

1. Type Independence: Not bound to a specific data type

2. Code Reusability: The same algorithm can be applied to different types

3. Efficiency: Generates optimized code at compile time with no runtime overhead.

STL, at least for me, represents the only way programming is possible. It is, indeed, quite different from C++ programming as it was presented and still is presented in most textbooks. But, you see, I was not trying to program in C++, I was trying to find the right way to deal with software. I have been searching for a language in which I could express what I wanted to say for a long time. In other words, I know what I want to say. I can say it in C++, I can say it in Ada, I can say it in Scheme. I adapt myself to the language, but the essence of what I am trying to say is language independent. So far, C++ is the best language I’ve discovered to say what I want to say. It is not the ideal medium, but I can do more in it than in any other language I tried. It is actually my hope that someday there will be a language designed specifically with generic programming in mind.

-Alexander A.Stepanov

Generic programming was studied from the late 1970s onward, starting with Ada, passing through Scheme, and eventually settling in C++,

where Alexander Stepanov

created the library STL to realize his philosophy that algorithms should be independent of data structures.

It is somewhat darkly comic when you consider that C++ itself originated as an effort to apply OOP to C.

Encapsulation, a core concept of OOP, is the combination of data and behavior, yet STL devotes its energy to separating that behavior from data.

It is quite fascinating that a foundational library embodying a philosophy different from OOP was born in a language created for OOP.

(Of course, depending on how you look at it, the two philosophies are not diametrically opposed, so this outcome was entirely plausible.)

The fact that generic programming actually emerged in opposition to OOP is something that

Alexander Stepanov, the creator of the C++ Standard Template Library (STL), himself stated.

Yes. STL is not object oriented. I think that object orientedness is almost as much of a hoax as Artificial Intelligence. I have yet to see an interesting piece of code that comes from these OO people. In a sense, I am unfair to AI: I learned a lot of stuff from the MIT AI Lab crowd, they have done some really fundamental work: Bill Gosper’s HAKMEM is one of the best things for a programmer to read. AI might not have had a serious foundation, but it produced Gosper and Stallman (Emacs), Moses (Macsyma) and Sussman (Scheme, together with Guy Steele). I find OOP technically unsound. It attempts to decompose the world in terms of interfaces that vary on a single type. To deal with the real problems you need multistoried algebras—families of interfaces that span multiple types. I find OOP philosophically unsound. It claims that everything is an object. Even if it is true it is not very interesting—saying that everything is an object is saying nothing at all. I find OOP methodologically wrong. It starts with classes. It is as if mathematicians would start with axioms. You do not start with axioms—you start with proofs. Only when you have found a bunch of related proofs, can you come up with axioms. You end with axioms. The same thing is true in programming: you have to start with interesting algorithms. Only when you understand them well, can you come up with an interface that will let them work.

-Alexander A.Stepanov

Alexander fiercely criticized OOP both technically and philosophically, and looking at the OOP concepts in modern languages, that criticism is understandable to a degree.

This is because modern languages such as C# and Java are designed so that everything inherits from 'object,' meaning every entity is viewed through the lens of an Object.

When that happens, the boundaries between objects become blurred, and a clear gap exists between theoretical OOP and its real-world implementation.

In fact, Stepanov's criticism of OOP is closer to the OOP of the early 1990s, which was largely centered on Inheritance. OOP did evolve from its early form,

and today it is conventional wisdom in modern architecture to avoid inheritance unless a clear is-a relationship exists. When you descend from design considerations to the low level, the reasons become even clearer.

OOP's Subtype Polymorphism forces runtime dynamic binding through a virtual table (vtable). This causes cache misses from memory pointer chasing, which inevitably introduces performance overhead at the machine-code level. By contrast, generic programming's Parametric Polymorphism fixes types at compile time and generates optimized code.

In conclusion, OOP is a paradigm that sacrifices hardware execution performance in exchange for runtime flexibility, while generics are a technical alternative that maximizes system efficiency by fixing types at compile time, and this is what lends weight to Stepanov's critique.

For those who might read this and wonder whether OOP is therefore unconditionally bad, let me add a clarification.

While Stepanov focuses on the 'type' problem of OOP, actual OOP programming generally focuses on 'relationships',

so it is fair to say that some of this may be a brilliant programmer's pride in his own discipline leading him to disparage a somewhat different philosophy.

Now that we know the history, let's take a look at how it is used.

Generic Programming in the Modern Era

There are several ways to classify generics.

Multiple approaches exist at a basic level, but let's just talk about how they are divided using the most commonly used classification.

Classification of Generic Implementation Strategies

Generics can be broadly divided into two categories based on when types are processed.

Static Generics

Types are fully resolved at compile time, and independent code is generated for each type. At runtime, the concept of generics itself does not exist; the code has already been transformed into concrete, type-specific code.

Implementation strategy: Monomorphization

template <typename T>

T Add(T a, T b) { return a + b; }

int main()

{

int sum = Add(3, 5); // Compiler generates `Add<int>`

double d = Add(1.0, 2.0); // Compiler generates `Add<double>` separately

}The compiler stamps out a separate function or class for each type used. Add<int> and Add<double> are completely different functions in the binary. There is no runtime overhead and optimization is maximized, but the binary size can increase because code is duplicated for every type used.

Representative languages: C++ templates, Rust, Swift

Dynamic Generics

Types are checked at compile time, but the generic structure is preserved at runtime as well. Rather than duplicating code for each type, a single piece of code handles multiple types. However, depending on how type information is managed at runtime, this splits into two strategies.

(a) Type Erasure — Java

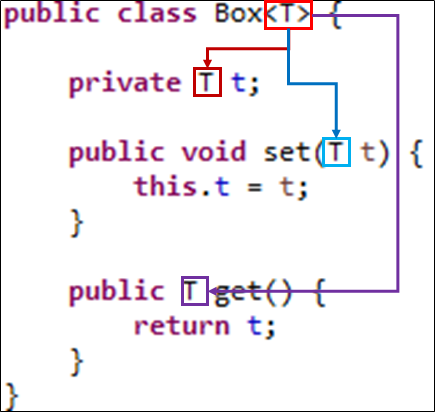

public class Box<T> {

private T value;

public void set(T value) { this.value = value; }

public T get() { return value; }

}

Box<Integer> intBox = new Box<>();

intBox.set(10);

int value = intBox.get(); //Enforced as `Integer` at compile timeAfter checking types at compile time, type information is erased at runtime. The code above is converted to Box<Object> after compilation, and a cast is inserted whenever get() is called. Only one bytecode exists, so there is no impact on binary size, but at runtime it is impossible to tell whether something is a Box<Integer> or a Box<String>.

(b) Reification — C#

public class Box<T>

{

private T value;

public void Set(T value) { this.value = value; }

public T Get() { return value; }

}

var intBox = new Box<int>();

Console.WriteLine(intBox.GetType()); // System.Box`1[System.Int32]In C#, generic type information is preserved even at runtime. The types remain in the IL as-is, and the JIT compiler generates separate native code for value types (int, float) in a manner similar to monomorphization, while sharing code for reference types (string, object). This avoids the limitations of type erasure while also gaining some performance benefits.

From Type Parameters to Constrained Generics

The basic unit of generics is the type parameter (T, K, V). Every generic language shares this approach. The difference lies in how strictly constraints are applied to these type parameters. (As for why T, K, and V are used: they are taken from the first letters of Type, Key, Value, Element, and Result, abbreviations that have become established conventions.)

(a) Unconstrained Type Parameters

template <typename T>

class Box

{

T value;

public:

void set(T v) { value = v; }

T get() { return value; }

};Any type can be passed in as T. The compiler only checks whether T supports a given operation at the point of instantiation. If it does not, an error occurs at that point, and the error message grows extremely long as it unwinds the template instantiation chain, which is a chronic problem with the unconstrained approach.

Representatives: C++ templates (pre-C++20), Java generics, C# generics

(b) Constrained Type Parameters

template <typename T>

concept Addable = requires(T a, T b) { a + b; };

template <Addable T>

T Add(T a, T b) { return a + b; }A constraint is explicitly stated on the type parameter: "this type must support at least these operations." If a type that does not satisfy Addable is passed in, a clear error is produced before instantiation. This structurally resolves the error-message hell of the unconstrained approach.

Representatives: C++20 Concepts, Rust Trait Bounds, Swift Protocol Generics, C# where T : constraints. Note that C# generic constraints such as where T : IComparable<T> also fall into this category. They are not as expressive as Concepts, but they belong to the same family in that they constrain type parameters through interfaces.

Generic programming subsequently finds application in Template Metaprogramming,

template <int N>

struct Factorial {

static constexpr int value = N * Factorial<N - 1>::value;

};

template <>

struct Factorial<0> {

static constexpr int value = 1;

};

int main() {

constexpr int result = Factorial<5>::value; //120

}where, if generic programming focuses on generalizing types, template metaprogramming aims at compile-time computation itself.

The key is that templates are used like a programming language, allowing a wide variety of programs to be built inside them.

In other words, it is an approach born out of generic programming in pursuit of performance.

Looking only at what has been covered so far, generics might seem like a silver bullet that extracts algorithms and applies them to everything with ease.

In practice, however, this is a theoretical perspective, and real-world application draws considerable criticism.

Generic programming seems to have only advantages,

but does it have no downsides?

template <typename InputIt, typename OutputIt, typename UnaryOp>

OutputIt transform(InputIt first, InputIt last, OutputIt d_first, UnaryOp unary_op);Let's look at one example.

The C++ std::transform function applies a unary operator (unary_op) to each element in the input range [first, last], outputs the results, and stores them via an iterator (d_first).

This code is very simple, but when something goes wrong, it starts spewing long error messages.

When a problem occurs in template code, the compiler climbs up through the abstraction layers to analyze it, generating complex error messages in the process that make it difficult for the programmer to find the mistake.

In the familiar 'LEGO assembly' style of programming (grabbing wrapped method blocks and composing them), mistakes are easy to spot at a glance, but template instantiation failures are genuinely hard to debug.

Also, Java's generic approach of Type Erasure

may look as though the compiler is checking types, but at runtime the type information is erased, which can cause unexpected errors.

(C# preserves generic type information even at runtime (Reification); concrete generic types are retained in the IL so type information can be verified at runtime, making this a Java-specific problem.)

And generic programming with type parameters is notoriously difficult to read.

In the end,

it may be superior and elegant in theory, but in practice it can be said to put programmers through hell.

Generic programming can truly enable beautiful programming,

and through it, an enormous amount of software engineering 'wealth' can be accumulated.

But if used incorrectly, you may fail to recognize the abstraction 'debt' hidden inside generic programming,

and you must understand that a great depression where programming becomes hell can follow.

Ultimately, the ability to weigh all of this and strike the right balance is what defines a programmer's skill.